Here is a scenario that is likely painfully familiar to you.

It is 11 PM on a Tuesday. You have 14 tabs open in your browser. You are staring at yet another Workday application portal, manually typing in the details of the college degree you earned six years ago, even though you just uploaded a PDF of your resume that contains that exact information. You painstakingly craft a cover letter, trying to inject some “personality” while hitting the mandatory keywords. You hit submit.

Two weeks later, you receive an automated “No-Reply” rejection email sent at 3 AM. No feedback. No human interaction. Just a sterile algorithmic dismissal.

The job market in 2026 is broken. We are experiencing the rise of “Ghost Jobs”: listings kept open by companies merely to harvest data or project an illusion of growth. We are fighting against hyper-aggressive Applicant Tracking Systems (I covered how to beat them in my guide to getting started with ChatGPT).

If you are applying to jobs manually one by one, you are bringing a knife to a drone fight. The companies evaluating you are fully automated. It is time you automate yourself.

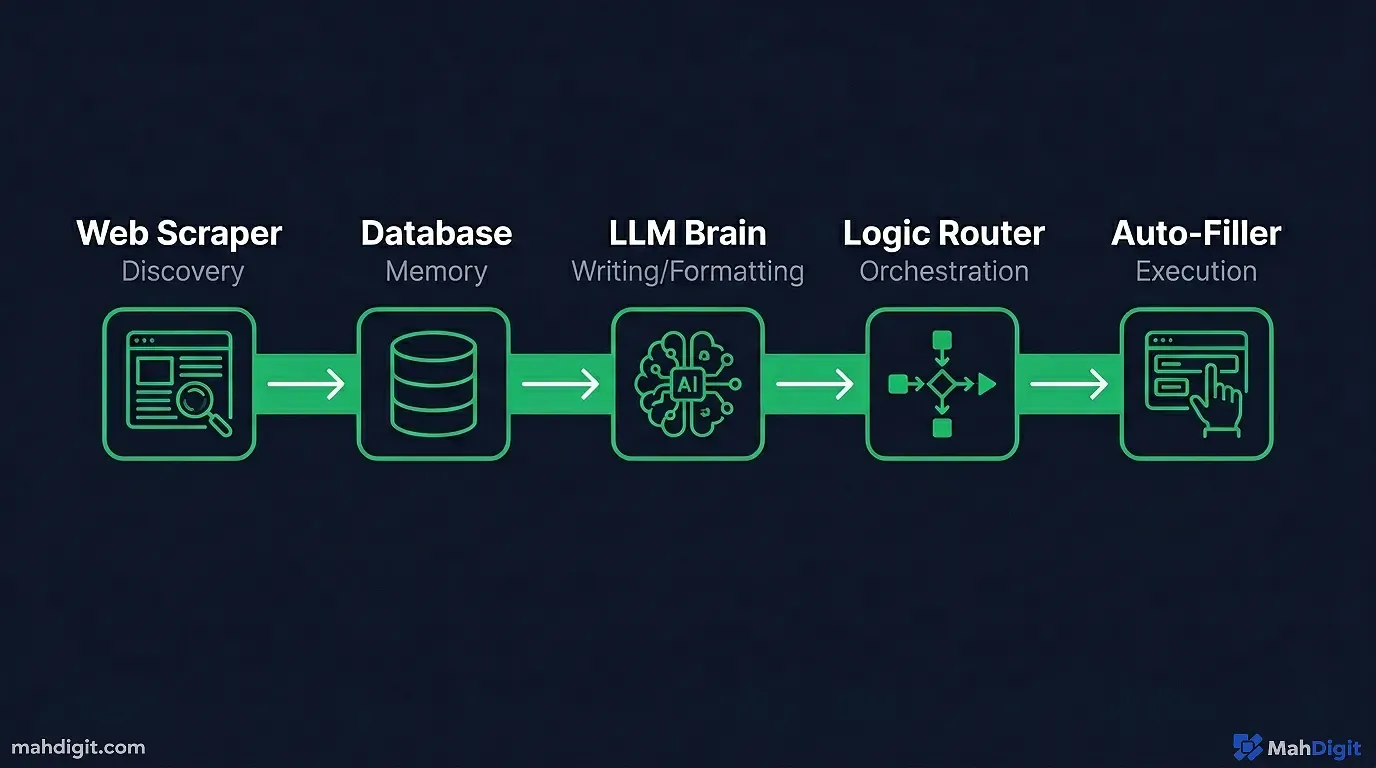

In this comprehensive, 2,500-word guide, we will stitch together data scrapers, Large Language Models (LLMs), and orchestration pipelines to build a machine that finds jobs in your niche, rewrites your resume for each specific job, generates a tailored cover letter, and tracks the applications, all while you are asleep.

The Philosophy and Ethics of the Automated Job Hunt

Before diving into the technical architecture, we need to address the ethical and strategic philosophy of automating your job search.

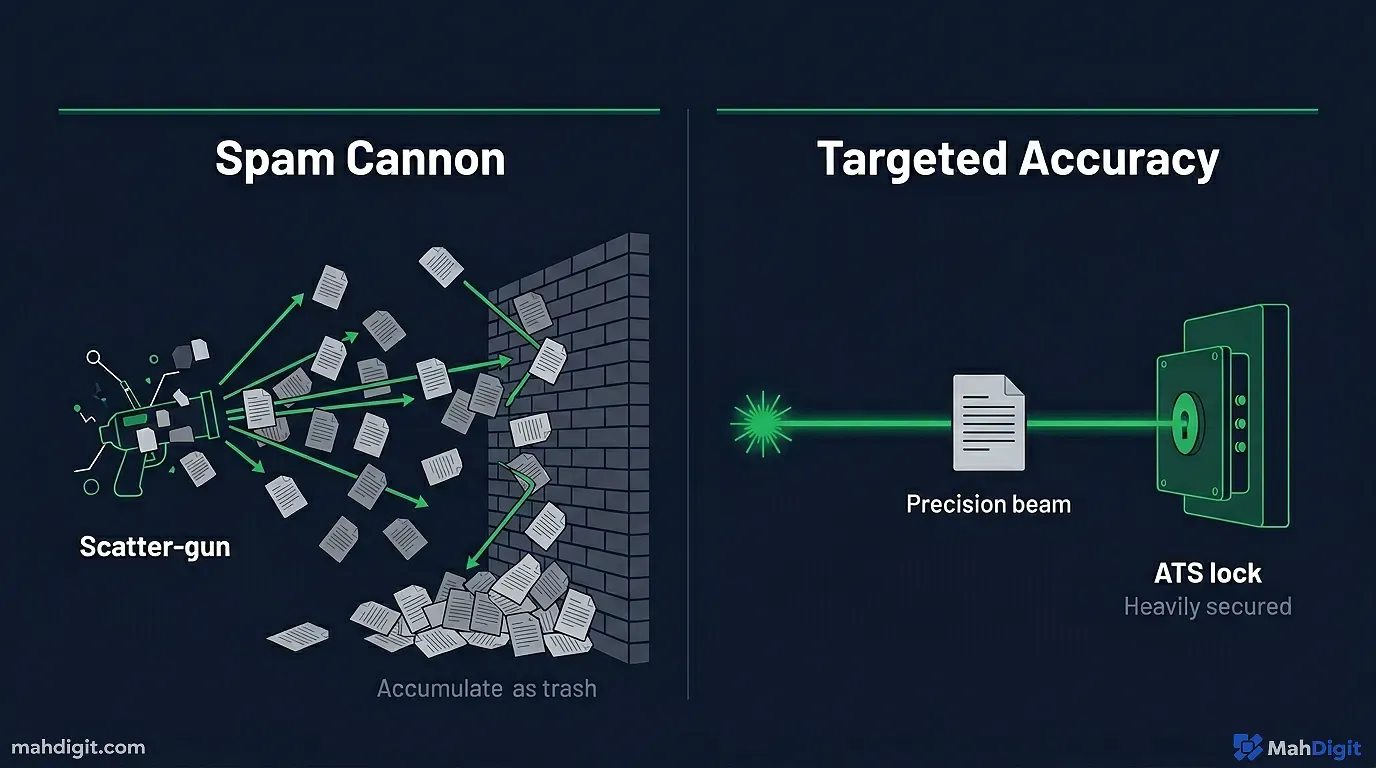

Quality vs. Quantity

There is a large trap in job search automation: the “Spam Cannon.” If you build a bot that blindly applies to 5,000 jobs a day, you will ruin your digital reputation. Recruiters can see every application you have submitted to their company. If Company X sees you apply for a “Senior DevOps Engineer” role and a “Junior Graphic Designer” role on the same day, you risk being flagged as a spammer.

The goal of our AI Agent is not infinite volume. It is hyper-targeted, high-volume accuracy. We want to apply to 20 to 50 jobs a day that are a perfect match, with application materials that look like they took three hours to write manually.

The Human-in-the-Loop (HITL) Requirement

I strongly advise against “Zero-Click Auto-Apply” for your dream jobs. The architecture we are building today is designed as a “Copilot System.” The AI does the heavy lifting: the finding, the reading, the formatting, and the drafting. But you review the final draft and click the final submit button.

This ensures you don’t accidentally send a cover letter that says, “Dear [Hiring Manager Name], I am passionate about [Company Mission Statement].”

The Ghost Job Epidemic

To understand why we must automate, we have to look objectively at what we are fighting. In late 2025, several major economic studies revealed that nearly 40% of all white-collar job postings on major platforms were “Ghost Jobs.”

These are roles that the company has zero intention of actually filling. Why do they keep them posted?

- The Illusion of Growth: A company actively hiring looks financially healthy to investors and shareholders, even if revenue is stagnant.

- Placating Overworked Employees: When an internal team complains about being understaffed, management points to the open job requisition as “proof” that help is on the way, buying themselves another six months of cheap labor.

- Data Harvesting: Some malicious tech firms use fake job postings simply to harvest thousands of resumes, which they then feed into their own internal LLMs to train their HR parsing algorithms for free.

When 4 out of 10 bullets you fire are guaranteed to hit a brick wall, you cannot afford to manually aim every single shot. You need volume simply to reach the baseline of actual, open positions. Automation is not a luxury; it is a mathematical necessity for survival.
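To put that arithmetic in concrete terms: if g percent of postings are ghosts, reaching n real openings takes on average n × 100 / (100 - g) applications. A minimal sketch, treating the 40% figure above as an assumed ghost rate; the function name is illustrative, and integer math is used so exact multiples are not nudged up by floating-point rounding.

```python
def applications_needed(real_targets: int, ghost_pct: int) -> int:
    """Expected applications needed so that, on average, `real_targets`
    of them land on genuinely open positions."""
    if not 0 <= ghost_pct < 100:
        raise ValueError("ghost_pct must be between 0 and 99")
    open_pct = 100 - ghost_pct
    # Integer ceiling division: -(-a // b) rounds up without using floats.
    return -(-real_targets * 100 // open_pct)


# With a 40% ghost rate, 30 real applications need 50 submissions.
print(applications_needed(30, 40))
```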

Comparing the Core Tool Stack

Here is a breakdown of the primary tools we will use, why we use them, and what they replace in the traditional manual workflow.

| Tool Name | Core Agentic Function | Replaces This Manual Task | Estimated Cost (2026) |
|---|---|---|---|
| Apify / Scraper | Scrapes hidden ATS job boards | Scrolling LinkedIn for 3 hours daily | ~$49/mo (Creator Tier) |
| n8n (Self-Hosted) | Orchestrates the entire data pipeline | Managing Excel tracking sheets | Free (if self-hosted) |
| Claude 3.5 Sonnet | The LLM that rewrites the resume/cover letter | Paying an “Executive Resume Writer” $500 | ~$0.02 per customized resume |
| Airtable | Acts as the relational database/CRM | Forgetting which jobs you applied for | $20/mo (Team Tier) |
| Make.com (Alt) | Easier orchestration if n8n is too technical | Managing Excel tracking sheets | $10.59/mo (Core Tier) |

Now that we understand the strategic philosophy and the tools, here is how the machine works.

Phase 1: Building the “Job Discovery Engine”

The first component of our agent is the Eyes. Your AI needs to know what jobs to look for and where to find them. Relying solely on LinkedIn Job Alerts is a recipe for joining the bottom of a 3,000-applicant pile.

We need to scrape niche job boards and specific company career pages.

The Tooling Options

- Apify: The undisputed king of web scraping in 2026. Apify has pre-built “Actors” (scripts) that can scrape data from LinkedIn, Indeed, Glassdoor, and specific ATS portals like Greenhouse or Lever.

- Browserless / Puppeteer: If you are comfortable with “Vibe Coding” (as discussed in my AI tools for developers guide), you can use tools like Cursor to write a custom headless browser script in 10 minutes.

- No-Code Solutions: Platforms like Careerswift or LoopCV offer out-of-the-box discovery portals if you prefer not to build the scraper logic yourself.
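To see what pulling jobs straight from an ATS looks like without a paid Actor, Greenhouse exposes a public Job Board API at https://boards-api.greenhouse.io/v1/boards/{board_token}/jobs. The standard-library sketch below fetches one company's board and keeps only titles that match your keywords and locations that mention “Remote.” The response fields it reads (`jobs`, `title`, `location.name`, `absolute_url`) are assumptions based on Greenhouse's published format, and both function names are hypothetical; check them against a live board before relying on this.

```python
import json
import urllib.request

# Public Greenhouse job-board endpoint; content=true includes the description HTML.
GREENHOUSE_URL = "https://boards-api.greenhouse.io/v1/boards/{token}/jobs?content=true"


def fetch_greenhouse_jobs(board_token: str) -> list[dict]:
    """Download every posting on one company's public Greenhouse board."""
    url = GREENHOUSE_URL.format(token=board_token)
    with urllib.request.urlopen(url, timeout=30) as resp:
        payload = json.load(resp)
    return payload.get("jobs", [])


def matching_jobs(jobs, title_keywords, remote_only=True):
    """Keep jobs whose title contains any keyword (case-insensitive),
    optionally restricted to locations that mention 'remote'."""
    keywords = [k.lower() for k in title_keywords]
    results = []
    for job in jobs:
        title = job.get("title", "")
        # Guard against a missing or null location object.
        location = (job.get("location") or {}).get("name", "")
        if not any(k in title.lower() for k in keywords):
            continue
        if remote_only and "remote" not in location.lower():
            continue
        results.append({"title": title, "location": location, "url": job.get("absolute_url", "")})
    return results
```

A call would look like `matching_jobs(fetch_greenhouse_jobs("yourtargetcompany"), ["product manager"])`, where the board token is the company slug from its boards.greenhouse.io URL. Keeping the network call and the filtering separate means the filter can be tested, or reused on Lever results, without hitting the API.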

The Architecture Setup (using Make / n8n)

We will use n8n as our orchestration platform because it is cheaper at scale: self-hosted, it handles large data loops easily without bankrupting you on per-task fees.

- The Trigger: Set an n8n Schedule Trigger (a cron job) to run every morning at 6 AM.

- The Fetch: Connect an HTTP request node or the Apify module to search for specific parameters.

- Search String: "Product Manager" AND ("AI" OR "Machine Learning") AND "Remote" AND "Greenhouse.io"

- Why Greenhouse? Searching specifically for the URL strings of popular ATS portals (like greenhouse.io or jobs.lever.co) bypasses the aggregator middle-men and takes you straight to the application source.

- The Filter (Llama 3 or GPT-4o-mini): Do not send all scraped jobs to the next step. Pass the scraped job descriptions through a cheap, fast LLM with a strict prompt:

- “Read this job description. Does it require more than 5 years of experience? Does it require in-office attendance? If YES to either, return ‘REJECT’. If NO, return ‘ACCEPT’ and extract the core desired skills as a JSON array.”
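Because the filter model answers in free text, it helps to parse its reply defensively before anything is written to your database. A minimal sketch, assuming the model follows the prompt above and returns `REJECT`, or `ACCEPT` followed by a JSON array of skills; anything else is treated as a rejection so malformed output never reaches Phase 2. The function name is hypothetical, and the same logic could be adapted into an n8n Code node.

```python
import json
import re


def parse_filter_verdict(reply: str) -> tuple[bool, list[str]]:
    """Return (accepted, skills) from the filter model's raw reply.

    Anything that is not a clear ACCEPT with a valid JSON array of strings
    counts as a rejection.
    """
    text = reply.strip()
    if not text.upper().startswith("ACCEPT"):
        return False, []
    # Take the span from the first "[" to the last "]" and try to decode it.
    match = re.search(r"\[.*\]", text, re.DOTALL)
    if match is None:
        return False, []
    try:
        skills = json.loads(match.group(0))
    except json.JSONDecodeError:
        return False, []
    if not isinstance(skills, list) or not all(isinstance(s, str) for s in skills):
        return False, []
    return True, skills
```

Failing closed is the design choice here: a job wrongly rejected costs you one posting, while a garbled “ACCEPT” would push junk into the resume pipeline.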

Now, instead of manually scrolling through 100 irrelevant jobs, your database (Airtable or Notion) is populated at 6 AM with 10 well-matched jobs, pre-analyzed against your specific requirements.

Phase 2: The Resume Tailoring Pipeline (RAG)

Sending the exact same generalist resume to 100 different companies is a strategic failure. Every job application needs a customized resume that highlights the specific experiences relevant to that specific job description.

Doing this manually takes an hour. Our agent will do it in 14 seconds.

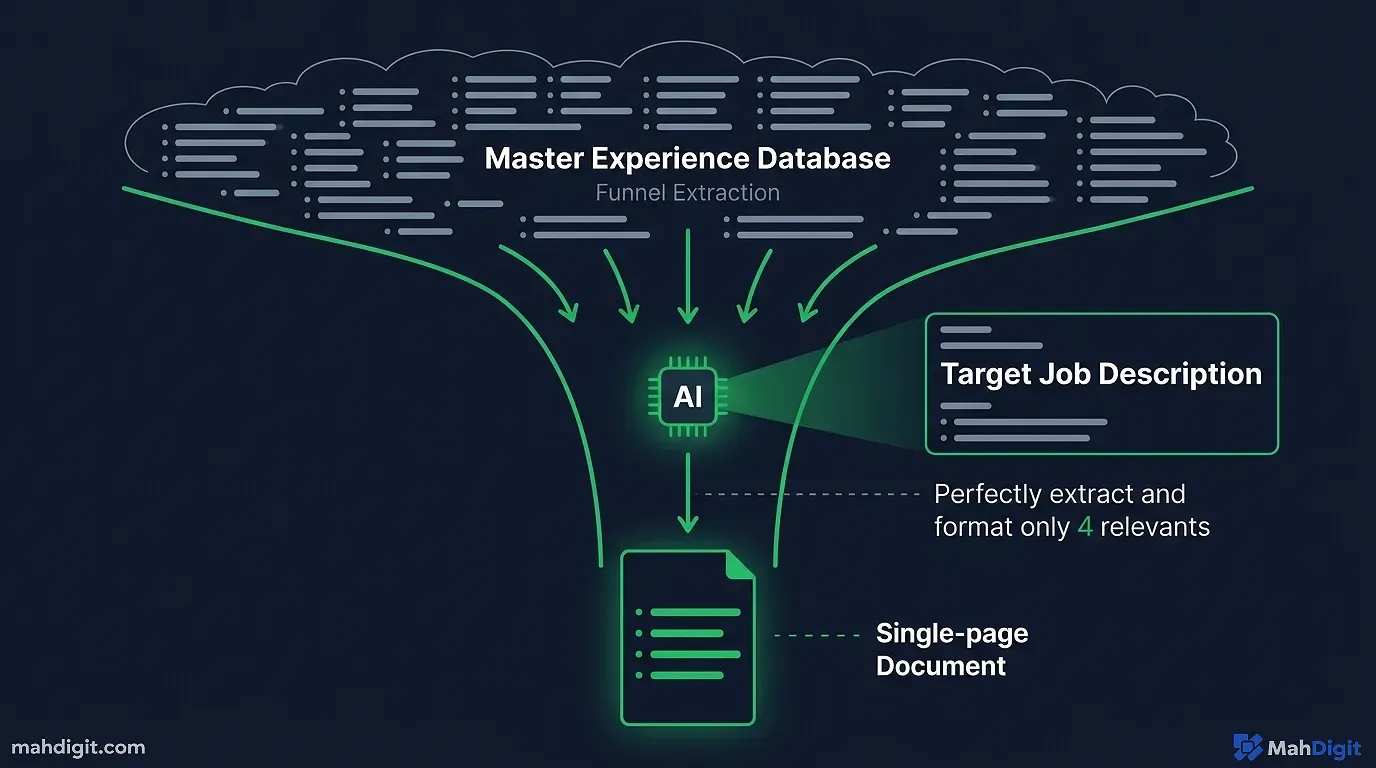

We are going to build a Retrieval-Augmented Generation (RAG) pipeline.

Step 1: Create Your “Master Database”

You cannot just give an AI your one-page resume and ask it to adapt it. You need to give the AI access to every single thing you have ever done professionally.

Create a large, 10-page Google Doc or Notion page. List every project, every metric, every software tool, every success, and every failure from every job you have ever held. Do not worry about formatting. This is the “Brain” of your agent.
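A model with a large context window can read the whole document, but the “retrieval” half of RAG means pre-selecting the entries most relevant to each job before prompting. A minimal sketch, assuming each experience in your Master Database is separated by a blank line; it swaps in simple keyword-overlap scoring where a production RAG pipeline would usually use embedding search, and the stopword list and function names are illustrative.

```python
import re
from collections import Counter

# Common words that carry no signal about skills or duties.
STOPWORDS = {"the", "and", "a", "an", "to", "of", "in", "for", "with",
             "on", "we", "you", "our", "is", "are", "as", "by", "i"}


def tokenize(text: str) -> list[str]:
    """Lowercase word tokens (keeping '+' and '#' for C++/C#), minus stopwords."""
    return [w for w in re.findall(r"[a-z0-9+#]+", text.lower()) if w not in STOPWORDS]


def top_experiences(master_db: str, job_description: str, k: int = 4) -> list[str]:
    """Return up to k master-database entries that share the most keywords
    with the job description, dropping entries with no overlap at all."""
    jd_terms = set(tokenize(job_description))
    entries = [e.strip() for e in re.split(r"\n\s*\n", master_db) if e.strip()]

    def score(entry: str) -> int:
        counts = Counter(tokenize(entry))
        return sum(counts[t] for t in jd_terms)

    # Sort by score (descending), breaking ties by original order.
    scored = sorted(((score(e), i, e) for i, e in enumerate(entries)),
                    key=lambda t: (-t[0], t[1]))
    return [e for s, _, e in scored if s > 0][:k]
```

The default k=4 mirrors the “4 most relevant work experiences” the tailoring prompt asks for; feeding only those entries keeps the prompt short and the model focused.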

Step 2: The Orchestration Logic

In our n8n workflow, once a job passes the “ACCEPT” filter from Phase 1, we move to Phase 2.

- The Injection: We take the raw Job Description and we feed it to an advanced LLM (Claude 3.5 Sonnet is arguably the best model for this specific task due to its large context window and natural writing style, as compared in my best free AI tools guide).

- The Prompt:

- “You are an expert executive resume writer. I am going to provide you with a Target Job Description and my ‘Master Career Database’. Your task is to extract the 4 most relevant work experiences from my database that prove I can execute the duties in the Job Description. Rewrite those bullet points using the XYZ formula (Accomplished X, as measured by Y, by doing Z). Ensure the keywords from the Job Description are integrated naturally. Return the output in Markdown format.”

- The Output: The LLM generates the text of a well-tailored resume.

- The Formatting: We use an API like Bannerbear or a custom HTML-to-PDF node in n8n to instantly render that Markdown text into a clean, single-column, ATS-friendly PDF.

You now have a bespoke, optimized resume generated specifically for Company X, saved directly into a Google Drive folder.
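An HTML-to-PDF step needs plain, single-column HTML as input. A minimal standard-library sketch, assuming the model's Markdown uses only `#`/`##` headings, `- ` bullets, and plain paragraphs (anything richer calls for a real Markdown library); the function name and inline CSS are illustrative choices, not part of n8n or Bannerbear.

```python
import html


def resume_markdown_to_html(markdown: str) -> str:
    """Convert a small Markdown subset (#, ##, '- ' bullets, paragraphs)
    into single-column HTML for an HTML-to-PDF renderer."""
    body: list[str] = []
    in_list = False
    for raw in markdown.splitlines():
        line = raw.strip()
        is_bullet = line.startswith("- ")
        # Close an open bullet list as soon as a non-bullet line appears.
        if in_list and not is_bullet:
            body.append("</ul>")
            in_list = False
        if not line:
            continue
        if line.startswith("## "):
            body.append(f"<h2>{html.escape(line[3:])}</h2>")
        elif line.startswith("# "):
            body.append(f"<h1>{html.escape(line[2:])}</h1>")
        elif is_bullet:
            if not in_list:
                body.append("<ul>")
                in_list = True
            body.append(f"<li>{html.escape(line[2:])}</li>")
        else:
            body.append(f"<p>{html.escape(line)}</p>")
    if in_list:
        body.append("</ul>")
    # One column and a common font keep the layout simple for ATS parsers.
    return (
        '<!DOCTYPE html><html><head><meta charset="utf-8">'
        "<style>body{font-family:Arial,sans-serif;max-width:7.5in;margin:auto}</style>"
        "</head><body>" + "\n".join(body) + "</body></html>"
    )
```

Escaping every text node matters here: a bullet such as “Improved p95 latency to <200 ms” would otherwise be swallowed as a broken HTML tag.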

Phase 3: The Cover Letter Architect

Many recruiters claim cover letters are dead. This is only partially true. Generic cover letters are dead.

If you start a letter with, “To whom it may concern, I am writing to express my interest in the position of…” the recruiter will physically recoil.

However, a specific, provocative, and value-driven cover letter is a strong differentiator. Because fewer candidates write them well, the people who do stand out.

We will automate the creation of a “Value-Proposition Letter.”

The “Pain-Point” Prompt

We add another node to our n8n workflow. The AI has already read the job description and extracted your relevant experience. Now we ask it to be creative.

The Core Prompt:

“Read the Target Job Description. Identify the biggest implied ‘pain point’ the company is currently facing that justifies hiring this role. Write a 3-paragraph cover letter. Paragraph 1: A bold hook acknowledging their pain point and why it matters in the current market. Do NOT use the phrase ‘I am writing to apply’. Paragraph 2: A specific example from my Master Career Database where I solved a nearly identical pain point, using hard metrics. Paragraph 3: A confident closing suggesting a brief call to discuss how I can implement a similar solution for them within my first 30 days. Tone: Confident, professional, slightly aggressive, completely devoid of corporate fluff.”

Because we are using an advanced model like Claude 3.5 Sonnet, the output isn’t robotic. It reads like an email sent from a competent consultant directly to the CEO.
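Even a strong model occasionally ignores an instruction or leaves a template placeholder behind (the “Dear [Hiring Manager Name]” failure from the Human-in-the-Loop section). A cheap guard is to lint each draft before it reaches your review queue. This sketch checks rules taken from this article: exactly three paragraphs, no “I am writing to apply” or “To whom it may concern,” and no leftover square-bracket placeholders. The rule set and function name are illustrative, not part of any tool.

```python
import re

BANNED_PHRASES = ["i am writing to apply", "to whom it may concern"]
PLACEHOLDER = re.compile(r"\[[^\]]+\]")  # e.g. "[Hiring Manager Name]"


def lint_cover_letter(letter: str) -> list[str]:
    """Return a list of problems; an empty list means the draft passed."""
    problems = []
    paragraphs = [p for p in re.split(r"\n\s*\n", letter.strip()) if p.strip()]
    if len(paragraphs) != 3:
        problems.append(f"expected 3 paragraphs, found {len(paragraphs)}")
    lowered = letter.lower()
    for phrase in BANNED_PHRASES:
        if phrase in lowered:
            problems.append(f"banned phrase: {phrase!r}")
    for placeholder in PLACEHOLDER.findall(letter):
        problems.append(f"unfilled placeholder: {placeholder}")
    return problems
```

Drafts that return a non-empty list can be regenerated automatically or flagged for closer review, so your manual pass focuses on tone rather than template debris.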

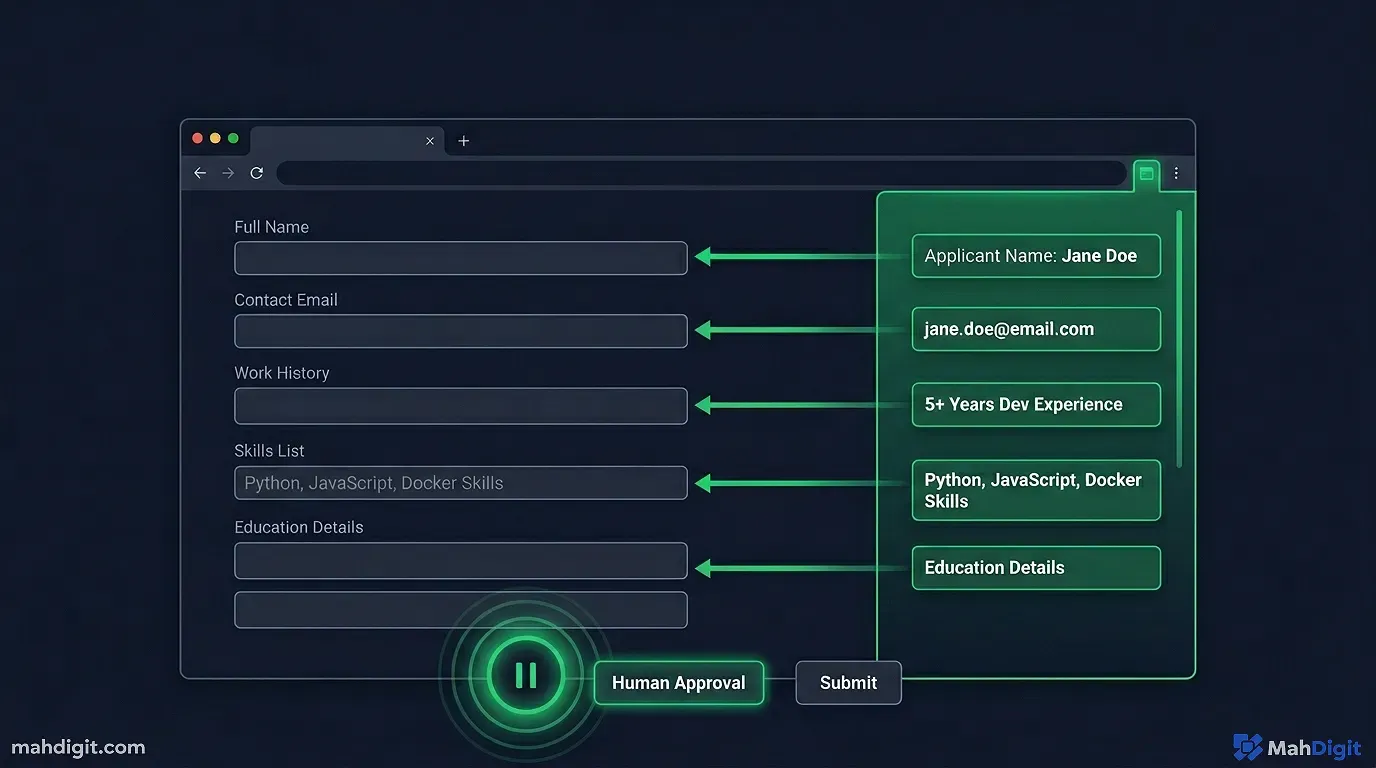

Phase 4: The Auto-Applicant Engine

This is where the architecture gets technically tricky. We have the jobs, the tailored resumes, and the bold cover letters. How do we get them into the system without manually typing our high school graduation date 50 times?

Option A: The Commercial Tools (The Easy Way)

In 2026, the market has exploded with “Auto-Apply” solutions. Tools like LazyApply, AIApply, and Sonara have mapped the DOM elements of the major ATS platforms (Workday, Greenhouse, Lever, Taleo).

You upload your generated documents to their platform, set your parameters, and their bots click the buttons, fill in the text fields, upload the PDFs, and answer the equal-opportunity demographic questions on your behalf.

Pros: Easy to set up and very fast.

Cons: It costs money (monthly subscriptions), and you lose a degree of Human-in-the-Loop control. If an application asks a specific custom question (“Provide a Loom video explaining why you love our specific product”), the generic auto-filler will likely fail or upload trash.

Option B: The Orchestrated Autofill (The Hacker Way)

If you want total control, you can build your own autofill layer: a browser-extension workflow in a tool like Automa, or a local Python Selenium script.

When you open a specific job link generated by your n8n workflow, you click a button on your browser extension.

- The extension pings your Airtable database.

- It retrieves the custom Resume PDF URL and the custom Cover Letter text generated specifically for that exact job.

- It uses a local AI model to read the input fields on the screen and map your master data (Name, Phone, Address, Links) into the correct boxes.

- It pauses.

Crucially, it stops before submitting so you can review the custom questions. You record your 30-second Loom video if needed, attach the bespoke resume, and click “Submit” manually.

This hybrid approach (90% AI automation, 10% manual verification) is the gold standard for high-tier jobs.
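The field-mapping step in Option B does not have to start with an AI model. A keyword lookup can handle the routine fields (name, email, phone, links) and hand everything it cannot match back to you, which fits the pause-before-submit design. This sketch swaps in simple label matching for the “local AI” described above; the profile values, keys, and synonym lists are hypothetical examples to adapt to your own data and the forms you encounter.

```python
import re

# Hypothetical master-profile data; in practice, load your own record.
PROFILE = {
    "first_name": "Jane",
    "last_name": "Doe",
    "email": "jane@example.com",
    "phone": "+1 555 0100",
    "linkedin": "https://www.linkedin.com/in/janedoe",
}

# Label fragments that usually identify each profile field (illustrative).
SYNONYMS = {
    "first_name": ["first name", "given name"],
    "last_name": ["last name", "surname", "family name"],
    "email": ["email", "e-mail"],
    "phone": ["phone", "mobile", "telephone"],
    "linkedin": ["linkedin"],
}


def map_fields(labels, profile=PROFILE):
    """Map form-field labels to profile values.

    Returns (filled, unresolved): `filled` maps label -> value, and
    `unresolved` lists labels a human must answer before submitting.
    """
    filled, unresolved = {}, []
    for label in labels:
        # Normalize: lowercase, strip asterisks and punctuation, collapse spaces.
        norm = " ".join(re.sub(r"[^a-z0-9@\- ]+", " ", label.lower()).split())
        key = next((k for k, words in SYNONYMS.items()
                    if any(w in norm for w in words)), None)
        if key is not None and key in profile:
            filled[label] = profile[key]
        else:
            unresolved.append(label)
    return filled, unresolved
```

Anything that lands in `unresolved`, such as a custom “Why us?” question, is exactly what the human-in-the-loop pause exists for.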

Phase 5: The Analytics Dashboard

If you apply to 300 jobs in a month, you cannot track them in your head. When a random recruiter calls you at 2 PM on a Thursday asking for “John,” you need to instantly know which company they represent, what resume they are looking at, and what the core pain point of the job was.

Your agent must be a tracking machine.

The Airtable Base

Your entire n8n orchestration ends by dumping data into an Airtable base (or Notion database).

Fields should include:

- Company Name

- Job Title

- Target Job Description (archived, so you still have it if they delete the listing).

- URL to the exact Custom Resume PDF submitted.

- The text of the exact Custom Cover Letter submitted.

- Date Applied.

- Status (Applied, Interview 1, Rejected).
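n8n has a built-in Airtable node for this final step, but if you prefer to write the row yourself, Airtable's REST API accepts a POST to https://api.airtable.com/v0/{baseId}/{tableName} with a personal access token in a Bearer header. In this sketch the base ID, table name, and field names are placeholders that must match your own base exactly; `build_application_record` and `create_airtable_record` are hypothetical helpers mirroring the field list above.

```python
import json
import urllib.parse
import urllib.request
from datetime import date


def build_application_record(company, title, job_description, resume_url,
                             cover_letter, applied_on=None, status="Applied"):
    """Shape one application as an Airtable 'create records' payload.
    Field names are placeholders and must match your base's column names."""
    fields = {
        "Company Name": company,
        "Job Title": title,
        "Target Job Description": job_description,
        "Custom Resume URL": resume_url,
        "Custom Cover Letter": cover_letter,
        "Date Applied": (applied_on or date.today()).isoformat(),
        "Status": status,
    }
    # typecast asks Airtable to convert plain strings into each column's type.
    return {"records": [{"fields": fields}], "typecast": True}


def create_airtable_record(token, base_id, table_name, payload):
    """POST the payload to Airtable and return the decoded JSON response."""
    url = f"https://api.airtable.com/v0/{base_id}/{urllib.parse.quote(table_name)}"
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Authorization": f"Bearer {token}",
                 "Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=30) as resp:
        return json.load(resp)
```

Building the payload separately from sending it lets you inspect or unit-test exactly what will be archived before any request leaves your machine.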

The “Interview Prep” Trigger

You can take this one step further. When you move an Airtable status from “Applied” to “Interview 1”, you can trigger a webhook back to n8n.

The AI looks at the company, looks at the custom resume you sent, and generates a new document: An Interview Cheat Sheet. It predicts the 10 most likely behavioral questions they will ask based on the job description, and pre-writes the “STAR” (Situation, Task, Action, Result) method answers using data from your master database. It emails this cheat sheet to you the night before your interview.
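The webhook handler only needs to react to one status transition and assemble a prompt from data you already stored. A minimal sketch, assuming whatever fires the webhook sends the record's fields plus its previous status, and that you also store the tailored resume's text in a field; the payload shape, the `previous_status` key, and the “Custom Resume Text” field are all hypothetical.

```python
PREP_PROMPT = (
    "You are an interview coach. Using the job description and the tailored resume below, "
    "predict the 10 most likely behavioral interview questions. For each, draft an answer "
    "in STAR format (Situation, Task, Action, Result) using only facts from my Master "
    "Career Database.\n\n"
    "JOB DESCRIPTION:\n{jd}\n\nRESUME SENT:\n{resume}\n\nMASTER CAREER DATABASE:\n{master}"
)


def handle_status_change(payload: dict, master_db: str):
    """Return a cheat-sheet prompt when a record moves from 'Applied' to
    'Interview 1'; return None for every other status change."""
    fields = payload.get("fields", {})
    if payload.get("previous_status") != "Applied" or fields.get("Status") != "Interview 1":
        return None
    return PREP_PROMPT.format(
        jd=fields.get("Target Job Description", ""),
        resume=fields.get("Custom Resume Text", ""),  # hypothetical field
        master=master_db,
    )
```

Returning None for other transitions keeps the workflow idempotent: moving a record to “Rejected,” or editing it again later, never triggers a duplicate cheat sheet.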

The Ethical Dilemma and The Spam Trap

You might be thinking, “If everyone does this, the system is going to collapse.”

Yes. It probably will. The traditional job application portal is dying under the weight of AI-generated spam. HR departments are deploying equally aggressive AI to filter out candidates who look like they are using automated tools.

This brings us right back to our foundational philosophy: Hyper-Targeted Accuracy.

If you use this infrastructure to apply to jobs you are unqualified for, the ATS will reject you instantly. If you use generic, unedited AI prompts for your cover letters, the recruiters using AI-detection software will flag you.

The purpose of this Agent is not to let you be lazy. The purpose of this Agent is to remove the mechanical friction of data entry.

You still have to possess the actual skills required for the job. You still have to perform in the interview. All this machine does is guarantee that your legitimate qualifications actually bypass the digital gatekeepers and land on the desk of a human being.

Key Takeaways

The days of manual data entry in the job hunt are over. By orchestrating a personalized AI agent, you can dramatically increase your application volume without sacrificing an ounce of application quality.

- Scrape, Don’t Scroll: Use Apify or custom scrapers to pull jobs directly from ATS backends (Greenhouse/Lever) based on specific query strings, ignoring the noise of LinkedIn.

- Embrace RAG for Resumes: Create a large “Master Database” of your experience. Prompt an LLM to surgically extract and rewrite only the bullet points relevant to the specific job description for every application.

- Automate the “Pain Point” Letter: Generic cover letters are dead. Prompt your AI to identify the company’s biggest implied business problem and write a confident, value-driven letter addressing it.

- Maintain the Human-in-the-Loop: Use auto-fillers to do 90% of the work, but always review the final application manually before hitting submit. Never let an AI speak for you blindly.

- Track Everything: Dump every customized resume, cover letter, and job description into an automated database. When the recruiter calls, you need instant context.

Stop letting the companies use superior technology against you. Build the machine, launch the agent, and let the software handle the bureaucracy so you can focus on mastering the actual interview. For the complementary skill set, explore my guide to automating workflows and the Zapier automation tutorial for choosing the right orchestration backbone.